Shannon's Information Measure: Entropy

DOI: 10.47976/RBHM2021v20n4145-72

Keywords: Entropy, Information measures, Axioms, Functional equation, Shannon's theory

Abstract

Soon after Claude E. Shannon published the article A Mathematical Theory of Communication in 1948, many fields drew on his work, chiefly because he had developed a formula to "measure information" within his mathematical model of communication, a quantity he called entropy. Shannon chose to justify his entropy formula operationally. As a result, mathematical research into the possible characterizations of information measures expanded. The aim of this text is to focus on the mathematical structures that underlie the concept of information measures. In doing so, we hope, in a didactic sense, to clarify the multiple readings of the concept of entropy.
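The abstract refers to Shannon's formula for "measuring information". As an illustration only, and not code from the article, the sketch below assumes the standard discrete form H(p) = -Σ p_i log₂ p_i, measured in bits, with the convention 0 log 0 = 0. The helper names `shannon_entropy` and `grouping_holds` are hypothetical. The second function numerically checks the grouping (recursivity) property, one of the conditions used in axiomatic characterizations such as FADDEEV (1956):

```python
import math


def shannon_entropy(probs):
    """Entropy in bits: H(p) = -sum(p_i * log2(p_i)), using the convention 0 * log 0 = 0."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probs must be a probability distribution")
    # Terms with p == 0 are skipped, which implements 0 * log 0 = 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)


def grouping_holds(probs, tol=1e-12):
    """Numerically check the grouping (recursivity) property:
    H(p1, p2, ..., pn) = H(p1 + p2, p3, ..., pn) + (p1 + p2) * H(p1/(p1+p2), p2/(p1+p2)).
    Hypothetical helper; assumes at least two outcomes and p1 + p2 > 0."""
    p1, p2, *rest = probs
    s = p1 + p2
    lhs = shannon_entropy(probs)
    rhs = shannon_entropy([s, *rest]) + s * shannon_entropy([p1 / s, p2 / s])
    return abs(lhs - rhs) < tol


print(shannon_entropy([0.5, 0.5]))          # 1.0 (a fair coin carries 1 bit)
print(shannon_entropy([0.25] * 4))          # 2.0 (four equally likely outcomes)
print(grouping_holds([0.5, 0.25, 0.25]))    # True
```

The grouping check compares two floating-point sums, so it uses a small tolerance rather than exact equality.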

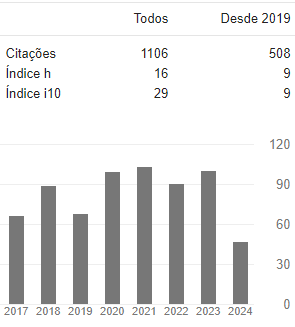

Descargas

Métricas

References

ACZÉL, J. Lectures on functional equations and their applications. New York, NY: Academic Press, 1966.

ACZÉL, J. On different characterizations of entropies. In: BEHARA, M.; KRICKEBERG, K.; WOLFOWITZ, J. (Eds.). Probability and Information Theory. Lecture Notes in Mathematics, v. 89. Springer, Berlin, Heidelberg, p. 1-6, 1969.

ACZÉL, J. Measuring information beyond communication theory: some probably useful and some almost certainly useless generalizations. Information Processing & Management, v. 20, n. 3, p. 383-395, 1984a.

ACZÉL, J. Measuring information beyond communication theory: why some generalized information measures may be useful, others not. Aequationes Mathematicae, v. 27, p. 1-19, 1984b.

ACZÉL, J. Characterizing information measures: approaching the end of an era. In: BOUCHON, B.; YAGER, R. R. (Eds.). International Conference on Information Processing and Management of Uncertainty in Knowledge-Based Systems. Springer, Berlin, Heidelberg, p. 357-384, 1986.

ACZÉL, J.; DARÓCZY, Z. On measures of information and their characterizations. New York, NY: Academic Press, v. 115, 1975.

ACZÉL, J.; DHOMBRES, J. Functional equations in several variables. Cambridge, UK: Cambridge University Press, 1989.

ACZÉL, J.; FORTE, B.; NG, C. T. Why the Shannon and Hartley entropies are ‘natural’. Advances in Applied Probability, v. 6, n. 1, p. 131-146, 1974.

AFTAB, O.; CHEUNG, P.; KIM, A.; THAKKAR, S.; YEDDANAPUDI, N. Information theory and the digital age: the structure of engineering revolutions. Cambridge, MA: Massachusetts Institute of Technology, 2001. Available at: http://web.mit.edu/6.933/www/Fall2001/Shannon2.pdf. Accessed: 22/05/2021, 17:57.

AGRELL, E.; ALVARADO, A.; KSCHISCHANG, F. R. Implications of information theory in optical fibre communications. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, v. 374, 20140438. Available at: https://doi.org/10.1098/rsta.2014.0438. Accessed: 02/12/2020, 10:00.

ALEXANDER, A. Infinitesimal: a teoria matemática que mudou o mundo. Rio de Janeiro, RJ: Jorge Zahar Editor Ltda., 2014.

ASH, R. B. Information theory. New York, NY: Dover Publications, 1990. (Originally published by Interscience Publishers, New York, 1965.)

BAR-HILLEL, Y.; CARNAP, R. Semantic information. The British Journal for the Philosophy of Science, v. 4, n. 14, p. 147-157, 1953.

BEN-NAIM, A. Discover entropy and the second law of thermodynamics: a playful way of discovering a law of nature. Singapore: World Scientific Publishing Company, 2010.

BOTTAZZINI, U. The higher calculus: a history of real and complex analysis from Euler to Weierstrass. New York, NY: Springer-Verlag, 1986.

BRADLEY, R. E.; SANDIFER, C. E. Cauchy’s cours d’analyse: an annotated translation. New York, NY: Springer-Verlag, 2009.

BRESSOUD, D. M. A radical approach to real analysis. Washington, DC: Mathematical Association of America, v. 2, 2007.

BRILLOUIN, L. Science and information theory. New York, NY: Academic Press Inc., 1956.

CHURCH, A. A note on the Entscheidungsproblem. The Journal of Symbolic Logic, v. 1, n. 1, p. 40-41, 1936.

CLAUSIUS, R. Über verschiedene für die Anwendung bequeme Formen der Hauptgleichungen der mechanischen Wärmetheorie. Annalen der Physik, v. 201, n. 7, p. 353-400, 1865.

CLAUSIUS, R. Abhandlungen über die mechanische Wärmetheorie. Braunschweig, Germany: Friedrich Vieweg und Sohn, 1867.

COVER, T. M. Broadcast channels. IEEE Transactions on Information Theory, v. 18, n. 1, p. 2-14, 1972.

COVER, T. M.; THOMAS, J. A. Elements of information theory. 2nd ed. Hoboken, NJ: Wiley-Interscience, 2006.

CSISZÁR, I. Axiomatic characterizations of information measures. Entropy, v. 10, n. 3, p. 261-273, p. 269, 2008.

CSISZÁR, I.; KÖRNER, J. Information theory: coding theorems for discrete memoryless systems. Cambridge, UK: Cambridge University Press, 2011.

CUTLAND, N. J. Computability: an introduction to recursive function theory. Cambridge, UK: Cambridge University Press, 1980.

DARÓCZY, Z. Generalized information functions. Information and Control, v. 16, n. 1, p. 36-51, 1970.

DAVIS, M. Computability and unsolvability. Mineola, NY: Dover Publications, 1985.

DAVIS, M.; SIGAL, R.; WEYUKER, E. J. Computability, complexity, and languages: fundamentals of theoretical computer science. Amsterdam, Netherlands: Elsevier, North Holland Publishing Co., v. 2, 1994.

DOOB, J. L. Review of C.E. Shannon’s Mathematical Theory of Communication. Mathematical Reviews, v. 10, p. 133, 1949.

EBANKS, B.; SAHOO, P.; SANDER, W. Characterizations of information measures. Singapore: World Scientific Publishing Company, 1998.

ELLERSICK, F. W. A conversation with Claude Shannon. IEEE Communications Magazine, v. 22, n. 5, p. 123-126, 1984.

FADDEEV, D. K. On the concept of entropy of a finite probability scheme. Originally published in Russian in Uspekhi Matematicheskikh Nauk, v. 11, n. 1, p. 227-231, 1956.

FEINSTEIN, A. Foundations of information theory. New York, NY: McGraw-Hill Book Company Inc., 1958.

FISHER, R. A. Theory of statistical estimation. Proceedings of the Cambridge Philosophical Society, v. 22, n. 5, p. 700-725, 1925.

GRABINER, J. V. The origin of Cauchy’s rigorous calculus. New York, NY: Dover Publications Inc., 1981.

GRATTAN-GUINNESS, I. Joseph Fourier and the revolution in mathematical physics. IMA Journal of Applied Mathematics, v. 5, n. 2, p. 230-253, 1969.

HAIRER, E.; WANNER, G. Analysis by its history. New York, NY: Springer-Verlag, 1996.

HAVRDA, J.; CHARVÁT, F. Quantification method of classification processes: concept of structural α-entropy. Kybernetika, v. 3, n. 1, p. 30-35, 1967.

HILBERT, D. Mathematical problems. Bulletin of the American Mathematical Society, v. 8, n. 10, p. 437-479, 1902.

INGARDEN, R. S.; URBANIK, K. Information without probability. Colloquium Mathematicum, v. 9, 1962.

JAHNKE, H. N. A History of analysis (history of mathematics). Providence, RI: American Mathematical Society, v. 24, 2003.

KANNAPPAN, P. Functional equations and inequalities with applications. New York, NY: Springer Science & Business Media, p. 404, 2009.

KENDALL, D. G. Functional equations in information theory. Zeitschrift für Wahrscheinlichkeitstheorie und Verwandte Gebiete, v. 2, n. 3, p. 225-229, 1964.

KHINCHIN, A. I. Mathematical foundations of information theory. Translated by SILVERMAN, R. A.; FRIEDMAN, M. D. New York, NY: Dover Publications, 1957. (Originally published in Russian in Uspekhi Matematicheskikh Nauk, v. 7, n. 3, p. 3-20, 1953 and v. 9, n. 1, p. 17-75, 1956.)

KLINE, M. Les fondements des mathématiques. La recherche, v. 54, p. 200-208, 1975.

KUHN, T. A estrutura das revoluções científicas. São Paulo, SP: Editora Perspectiva, 1994.

LEE, P. M. On the axioms of information theory. The Annals of Mathematical Statistics, v. 35, n. 1, p. 415-418, 1964.

LÜTZEN, J. The foundation of analysis in the 19th century. In: JAHNKE, H. N. (Ed.). A history of analysis. American Mathematical Society, p. 155-195, 2003.

MAGOSSI, J. C.; PAVIOTTI, J. R. Incerteza em entropia. Revista Brasileira de História da Ciência, v. 12, n. 1, p. 84-96, 2019.

MAGOSSI, J. C.; BARROS, A. C. DA C. A entropia de Shannon: uma abordagem axiomática. REMAT: Revista Eletrônica da Matemática, v. 7, n. 1, p. e3013, 26 May 2021.

MOSER, S. M.; CHEN, P. N. A student’s guide to coding and information theory. Cambridge, UK: Cambridge University Press, 2012.

PIERCE, J. R. An introduction to information theory: symbols, signals and noise. 2nd rev. ed. New York, NY: Dover Publications, 1980. (Originally published in 1961 by Harper & Brothers.)

REID, C. Hilbert-Courant. New York, NY: Springer-Verlag, 1986.

REID, C. Courant. New York, NY: Springer Science & Business Media, 2013.

RÉNYI, A. On measures of entropy and information. In: Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, Volume 1: Contributions to the Theory of Statistics, Berkeley, CA, USA, p. 547-561, 1961.

REZA, F. M. An introduction to information theory. New York, NY: McGraw-Hill, 1961.

ROGERS, H., Jr. Theory of recursive functions and effective computability. New York, NY: McGraw-Hill, 1967.

SCHUBRING, G. Conflicts between generalization, rigor, and intuition: number concepts underlying the development of analysis in 17-19th century France and Germany. New York, NY: Springer-Verlag, 2005.

SCHÜTZENBERGER, M. P. On some measures of information used in statistics. In: Proceedings of the Third Symposium on Information Theory, London, UK, p. 18-25, 1955.

SHANNON, C. E. A mathematical theory of communication. Bell System Technical Journal, v. 27, p. 379-423 (July), 623-656 (October), 1948.

SHANNON, C. E. The bandwagon. IRE Transactions on Information Theory, v. 2, n. 1, p. 3, 1956.

SIPSER, M. Introduction to the theory of computation. Boston, MA: PWS Publishing Company, 1997.

SMALL, C. G. Functional equations and how to solve them. New York, NY: Springer-Verlag, 2007.

SPIVAK, D. I. Category theory for the sciences. Cambridge, MA: The MIT Press, 2014.

TRIBUS, M.; McIRVINE, E. C. Energy and information. Scientific American, v. 225, n. 3, p. 179-190, 1971.

TURING, A. M. On computable numbers, with an application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, v. 2, n. 1, p. 230-265, 1937.

TVERBERG, H. A new derivation of the information function. Mathematica Scandinavica, v. 6, p. 297-298, 1958.

VAN VLECK, E. B. The influence of Fourier series on the development of mathematics. Science, v. 39, n. 99, p. 113-124, 1914.

VERDÚ, S. Empirical estimation of information measures: a literature guide. Entropy, v. 21, n. 8, 720, p. 2, 2019.

VERDÚ, S.; McLAUGHLIN, S. W. Information theory: 50 years of discovery. Piscataway, NJ: IEEE Press, 2000.

WEHRL, A. General properties of entropy. Reviews of Modern Physics, v. 50, n. 2, p. 221-260, 1978.

WEHRL, A. The many facets of entropy. Reports on Mathematical Physics, v. 30, n. 1, p. 119-129, 1991.

WIENER, N. Cybernetics: or control and communication in the animal and the machine. Cambridge, MA: The MIT Press, 1948.
